Have you ever wanted to use a strongly-typed collection to bind your data presentation controls to, only to find that you have very limited sorting capabilities, if any at all?

If you are trying to stick to good object-oriented design and shrink the amount of data that you keep in memory, transfer from your data source, or serialize to clients, you likely have run into this situation because you are using strongly-typed collections of your domain objects. So what do you do if you need to sort those collections for presentation or faster searching?

One common solution to the problem is to not use a collection when you need to sort the data and instead switch to using a DataSet. You can also choose to get data directly from the source already sorted and either bind directly to it (using a DataReader) or create another read-only collection. Unfortunately, this means potentially duplicating a lot of data in memory, creating greater coupling between the data and presentation tier, and increasing maintenance costs by having multiple points of contact between those tiers.

There is, thankfully, another solution, which is to make the custom collection sortable. The .NET Framework provides some functionality in this direction, but it has certain limitations. The ArrayList class supports a Sort method that has three overloads?one will sort by the IComparable implementation provided by the objects in the collection; another allows you to specify an IComparer to use for sorting, and the third overload allows the specification of an IComparer, a start index, and a count to sort only part of the list.

All three of these methods ultimately call the Array.Sort method, which has a very similar signature, and pass in the ArrayList's underlying Object array to be sorted using the specified IComparer (or Comparer.Default if none is specified). The Array.Sort method uses the QuickSort algorithm, which is widely agreed upon to be the fastest sorting algorithm since it sorts in O(N*log2N) time, which means that, on average, it will sort only to the logarithm (with a base of two) of the number of items times the number of items in the collection.

While using the QuickSort algorithm is great, the limitations of the provided framework are two-fold. First, to use this to sort a collection by one or more of the properties of its objects, you have to implement a custom IComparer for each property that you want to sort upon. Second, if you want to sort on multiple properties at once, providing IComparer implementations for each possible combination of properties would easily become ridiculously unmanageable.

For instance, an object with eight properties could have 40,320 permutations, not including varying the direction on each property sorted upon. If you multiply this by the number of different types in a particular domain, you can see how this is not a viable solution. Clearly, a different solution is necessary if you want to sort your collections, and that is where the solution in this article comes into play.

The Solution

My solution uses dynamic code generation, compilation, and execution to allow users to sort a custom object collection using the familiar syntax of T-SQL. This implementation allows the user to call a Sort method on the collection, and pass in a string that specifies the sort order, e.g., using “SomeProperty1, SomeProperty3 DESC” as the sort expression.

You can download the code now and start taking advantage of this capability, but I would like to spend some time explaining how this solution is possible and what the caveats are if you go this route.

The core enabling feature of this sortable collection is the dynamic creation of IComparer implementations through the GetMultiComparer method. In short, this method builds a string containing the code required for an IComparer implementation, uses the C# compiler to compile the code, generating a new assembly in memory, and finally uses Reflection to create an instance of the IComparer from the new assembly. Let me explain each of these steps in more depth.

IComparer Explained

First, consider Listing 1, which shows the code needed to create an implementation of IComparer that compares the values of a particular property on a particular type. There are two basic steps: the code first ensures that both objects being compared can be cast to the expected type (and that they're not null/Nothing). Next, the code calls the CompareTo method on the desired property of the first instance, passing in the value of the same property on the second instance. This technique demonstrates what is required to compare on one property, but what if you wanted to compare on two, three, four, or even more properties in the same comparer?

Listing 2 shows the multiple-property solution. All that is necessary is that you compare each property in the desired order, and after each comparison, you check to see if further comparison is necessary. This latter check is done by checking the result of the call to CompareTo, which, by the way, is an implementation of the IComparable interface?it's assumed that the properties being compared implement this interface.

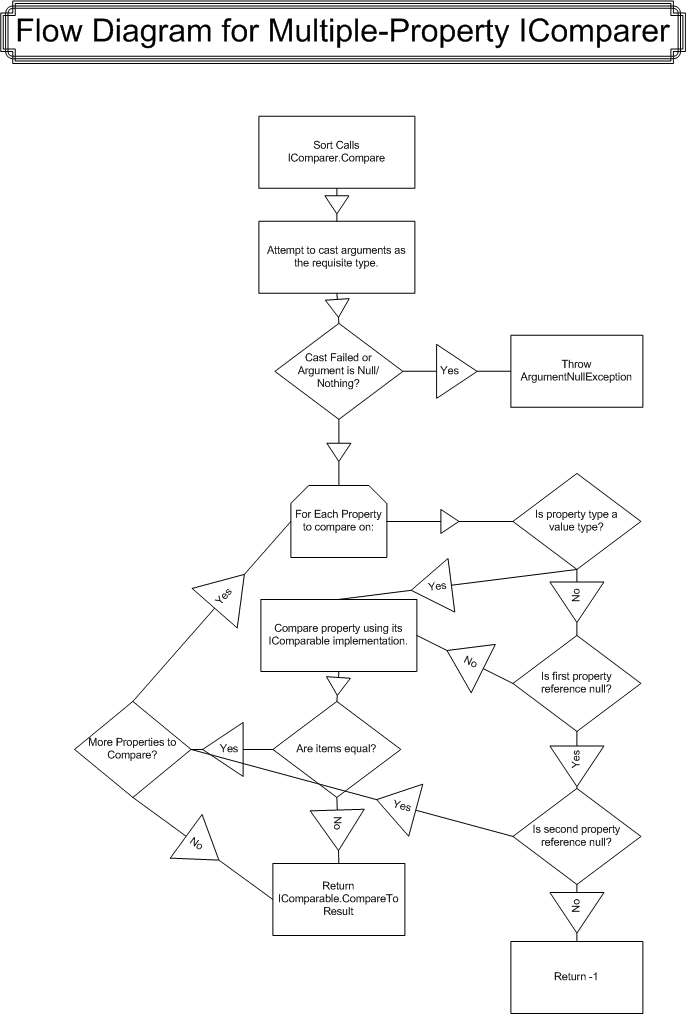

If the value returned by CompareTo is less than zero, it means the first instance is less than the second. If the value is greater than zero, it means that the first instance is greater, and if the value is zero, they are equal. You only need to compare further if the current comparison results in equality because, if it doesn't, that means you already know how to sort the two items being compared and can return that value as your result. If your compared values are equal, you need further comparisons to determine sort order. This logic is shown in Figure 1.

Notice that after the third comparison in Listing 2, the code returns -(result), if the comparison results in inequality. Use this syntax if you need that particular comparison to result in a descending sort order; it simply inverts the meaning of the result of CompareTo.

You can now see what would be necessary if you want to write each possible combination of property comparisons. Varying by ascending and descending directions on each property sort would only further increase the possible permutations. Obviously, you need a simpler solution, one that is easier to produce and easier to maintain.

You could, of course, have a code generator spit out the thousands of comparers for each type, but that would still be unmanageable. Another option would be to guess what your common sort operations might be and only write comparers for those. That, too, could get unmanageable and adding new sorts later would require recompilation and redistribution.

It makes sense to devise a way to dynamically generate comparers or to create a dynamic comparer. The latter would require extensive use of Reflection for each comparison, and on larger collections, this could noticeably impact performance. (In fact, a colleague of mine wrote about such a comparer on ASPAlliance.com. You can find it in the related resources under the title "Generic Comparer.") See http://aspalliance.com/461.

So this leaves dynamically creating comparers as you need them, and this is what I have chosen as the best solution. While it does have drawbacks, particularly if you do use many different permutations, I still think that it is clearly better than the alternatives for sorting custom object collections. Whether or not it is better than alternative means of getting sorted data (as described in the first part of this article) is up to you to decide.

Dynamically Creating Comparers

Now let's get into the nitty-gritty of implementing the solution to dynamically create comparers. Listing 3 shows the first part of the GetMultiComparer method, which you'll use to create the appropriate code that will provide you with the comparer you need to achieve the desired sort.

The first few lines lay the foundation, preparing the comparer name (simply the sort expression with the commas and spaces removed) and the full comparer type name, which is created by appending the comparer name onto the full type name of the object being compared, concatenated with “DynamicComparers.” The code uses the comparer name to create a unique namespace for the comparer types. You may think that these type names are ugly, but it also ensures that they are unique and predictable.

Next, the code checks the comparer cache to see if you have already created a comparer for this sort expression. The cache simply enhances performance; it is a Hashtable that keeps an instance of the comparer in memory so that the code doesn't have to recompile, execute, and instantiate a new comparer every time it's needed. If the comparer you need is in the cache, as you'll see later, the code simply retrieves that instance from the cache and returns it. For the purpose of explanation in this article, assume that the comparer is not in the cache.

Once the code determines that it doesn't already have an instance of the comparer in the cache, the code declares the basic template code for all the comparers using a couple of placeholders for the type name and comparer name. The template contains another placeholder for the actual comparison code, and that will vary for each sort expression.

At this point, the code loops through the individual properties in the sort expression. (Each property was split out of the sort expression using the comma delimiter.) For each property in the sort expression, it checks whether you specified ascending or descending by further splitting with a space delimiter (for example, “MyProperty ASC” would split into an array with “MyProperty” in the zero index and “ASC” in the one index). Comparing the one index of the resultant array to “DESC” determines the sort direction for that property. The code then appends the line to call CompareTo on the current property, capturing the result in the “result” integer and then checks to see if further comparison is required (as discussed previously with Listing 2 and Figure 1).

Once that loop is complete, you should have a StringBuilder with two lines for each property used in the sort expression?one line to compare and one line to determine if further comparison is needed. The code then appends a final return statement to ensure that all code paths return a value (in that case, the value would be zero, indicating equality for all comparisons), and the last line in Listing 3 replaces all of the placeholders in the template with the corresponding dynamic values. At this point you will have a complete implementation of IComparer as exemplified in Listing 2.

The next section of code, in Listing 4, demonstrates how to use the CSharpCodeProvider to create a compiler instance to (surprise!) compile the code you just created. The only tricky part of this section is getting the file path to each of the referenced assemblies. To do this automatically, i.e., without configuration files, you must use Reflection on the custom object's type (passed in as a parameter to this method) to get its assembly information and, subsequently, the referenced assemblies.

Unfortunately, the GetReferencedAssemblies method returns an array of AssemblyName objects, so you have to additionally use the GetAssemblyPath method (Listing 5) to load the referenced assemblies, since the code base location is not available from the AssemblyName instances provided. Loading the assembly uses runtime assembly probing to find the referenced assemblies, so it will be using the same code base that is being used by the executing assembly. The GetAssemblyPath method loads the assembly and uses another cache called assemblyPaths?this is so we don't have to load a particular assembly more than once to get its location.

Once you've added all of the assembly references, you use the CompileAssemblyFromSource method to compile your dynamically created code into Microsoft Intermediate Language (MSIL). You'll note that the code specifies that the compiler should generate the assembly in memory; this is done mostly for performance reasons?so it doesn't have to do any more file I/O than necessary. The compile method returns results that it uses to determine if there were any errors during compilation; this was particularly useful while debugging the dynamically-generated code and you can see that it builds an informational exception based on the compiler errors, if there are any.

Finally, assuming there were no errors, you now have a new assembly in memory that you can use to create an instance of your new comparer. To do this, you must use Reflection again (one more reason to cache the instance you create) by using the Assembly.CreateInstance method, which will load the assembly into the current AppDomain, if not already loaded, and instantiate the requested type. Assuming there are no errors, you now have a new, dynamically-created comparer, so you can add it to your cache and return it from the method to be used for sorting.

Using SortableCollectionBase

Now that you know how a multiple-property comparer is written and how to create them dynamically, it is time to discuss how to make practical use of them in an application. You could create a utility method that takes a collection and a sort expression that would in turn use the GetMultiComparer method with the ArrayList's Sort method to sort the given collection. Doing so would require that all of your collections either expose a public Sort method like the ArrayList's or a public accessor for the Collection's underlying ArrayList.

I suggest adding the SortableCollectionBase type to your libraries and deriving collections that you want to sort from that type. This provides familiar usage, like the DataView, that allows you to specify the sort expression on the collection type itself; it is also similar to the ArrayList in that respect. This suggestion also promotes good object-oriented design, since the action is taking place on the collection object. Use it as your collection base instead of System.Collections.CollectionBase.

In fact, I would recommend that you create a CollectionBase type in your own class hierarchy, deriving all of your collection types from that type and in turn deriving your CollectionBase from SortableCollectionBase. The reason for this is that if you decide you don't like my SortableCollectionBase, something better comes out in .NET v2, or I or someone else comes out with a better base class, you can more easily change the base of all your collections.

Of course, you will have to retain the methods that SortableCollectionBase provides (primarily GetNewView and Sort) in your new base, if you don't want to update all usage of your collection types that use these methods. You could, for instance, add the Sort method to your CollectionBase and have it call any new sort method you like (or even none at all). In any case, using your own CollectionBase is a good practice in general and will likely reduce maintenance in the long run.

Regardless of how you are deriving from SortableCollectionBase, doing so will provide you with the Sort method that simply takes a T-SQL-like sort expression (without the ORDER BY clause), and it also provides you with the GetNewView methods that will create a shallow copy of the current collection. The benefit of GetNewView is that you can have multiple views of the same collection of objects, with possibly different sorts, much like the DataView provides different views of the DataTable. It also gives you a thread-safe copy of the collection, which is useful if other threads might be manipulating the source collection at the same time.

I referred to GetNewView methods because there are three overloads. The first, which takes no parameters, will return a shallow copy SortableCollectionBase. The other two return an IList and take a System.Type parameter that should be the type of a collection that derives from SortableCollectionBase. The point of this abstraction is to make casting easier. The third overload also takes a sort expression that it will use to sort the new shallow copy, so you can get a new view with a different sort in one statement, which is quite useful for, e.g., binding to a data control. You could further extend the GetNewView method to also allow for filtering, extending the DataView analogy.

SortableCollectionBase in .NET 2.0

Before moving on to testing, I'd like to make a few observations about how this will work in .NET 2.0, currently known as “Whidbey.” As far as I can tell, Microsoft is not building this kind of sorting functionality into the Framework. This is probably because of the potential memory bloat and performance hit of the dynamic assembly creation versus the perceived benefit. Then again, maybe it just wasn't on their TO DO list.

In any case, what this means is that you can port the SortableCollectionBase to .NET 2.0 and pick up a few nice benefits. The first and most obvious benefit is that you can declare a type parameter (see http://www.15seconds.com/Issue/040525.htm for my article on generics if you're not familiar with this terminology). Doing this allows you to reuse the SortableCollectionBase for any type without having to declare collections for each type. In fact, the SortableCollection<T> example included in the sample code for this article inherits from System.Collections.Generic.List<T**>,** which provides you with all the functionality of that generic type and adds the sorting functionality available in SortableCollectionBase.

In addition, you could refactor the GetNewView method to return a SortableCollection<T**>,** eliminating the need for one of the overloads and the System.Type method parameter as well as the Reflection needed to instantiate the return type.

The only really tricky part I found in porting to .NET 2.0 was that the System.Collections.Generic.List<T>.Sort method requires a System.Collections.Generic.IComparer<T> as a parameter instead of the old standard IComparer, so I had to figure out how to pass a type argument “T” to the .NET 2.0 type parameter “T” via Reflection. On Google I found out about the BindGenericParameters method on the System.Type type. The following lines demonstrate what you need to do to pass type arguments via Reflection.

System.Type constructedType, genericType;

genericType = compiled.CompiledAssembly.GetType(

dynamicTypeName + "`1");

constructedType =

genericType.BindGenericParameters(

new Type[1] {typeof(T)});

comparer = constructedType.GetConstructor(

new Type[0] {}).Invoke(null)

as System.Collections.Generic.IComparer<T>;

As you can see, first you need to get an instance of the generic type. The backtick () plus integer indicates the arity of the generic type, that is, the number of type parameters that the generic type has. In this case, I only have one type parameter, so it is "1" for this example. Once you get the generic type instance, you call BindGenericParameters on it, passing in a System.Type array of types to use (the order of the array should match the order of the type parameters from left to right). BindGenericParameters returns the constructed type that you can use to create an instance of the new comparer.

Using generics means that you do not have to cast the type in the IComparer<T> implementation, but you do have to apply a constraint on the generic comparer that specifies it must be the type of your custom object so that you can access the properties of that type in your comparer. Thus, in reality, you really don't have much of a generic comparer in terms of reusability, but at least you gain a bit of performance by not casting.

Testing SortableCollectionBase

You now know all you need to know (and more) to get started sorting your custom object collections easily and quickly. The sample code with this article also includes a test project that has test fixtures you can use with NUnit 2.1 or 2.2. The test uses the Northwind database as a data source for collection data. It declares an Order class with a few properties (for expediency) that will be used to sort upon, and it declares an OrderCollection that inherits from SortableCollectionBase.

The sample code includes one test method (Listing 6), and this method serves a dual purpose. It first determines how much time it takes to sort the collection, and then it checks the validity of the sort. To test the validation, the test method compares the sort result of the SortableCollectionBase.Sort method (testGroup) with the sort result of the same sort expression passed to SQL Server (controlGroup).

The first line of the test Sort method calls ReloadValues, which simply opens up the SortableCollectionBase.xml file and retrieves relevant variable settings, such as the select statement, sort expression, connection string, and whether or not to test only the sorting time. The last value can save time when testing very large collections (so you don't have to create it twice and compare).

The Sort method creates an OrderCollection, calls the SortableCollectionBase.Sort method on it (timing how long this takes), and then, if validation is requested in the configuration file, it loads up the same items from SQL Server using the same sort expression and compares each item in the test group with those of the control group.

The key for the validation comparisons is to only compare the values that were sorted upon because they are the only ones guaranteed to be equal. Since the QuickSort algorithm is not stable, there is no guarantee that items that are equal (in terms of values compared in the sort) will remain in the order that they were in prior to the sort. So, for instance, if you had a collection of employees sorted by last name and you wanted to further sort them by department, there is no guarantee that after sorting by department they will still be sorted by last name. What this means is that you need to specify every sort criterion needed when calling the Sort method.

If you look closely at the test helper methods, you'll notice that I use a lot of Reflection. This is simply to add flexibility to the test code, so you can, for instance, change the sort expression in the configuration file without having to update the test code. If you find this confusing, just stick to actually running the tests by modifying the configuration file values, trying different sort expressions and different numbers of items selected.

You may also be asking yourself, if you're familiar with the Northwind database, how I can use that as a test source for large collections, since the largest item count is in the Orders database, which has around 850 records. The answer to this is to run the two included SQL scripts, first the OrdersAddEntropy.sql, which adds an “Entropy” column to the Orders table, and then the BloatOrder.sql script that increases the record count in the Orders table to over 100,000. The SQL scripts recursively insert all of the rows in the table seven times. The Entropy column is used to add some semi-random data into the rows, so you can use it as the last value in the sort expression to provide some uniqueness (since other than that, we're just looking at a bunch of copies of the original 850 rows). After running these, you should have a sufficiently large data source to build out some huge collections.

In my tests, I found that sorting a collection of 10,000 items took about 250 milliseconds on my 2.2 GHz Pentium 4M notebook. Sorting 100,000 items took only around 1.4-1.5 seconds, which is really quite fast all things considered; however, I wouldn't recommend keeping that many custom objects in memory as a general rule. I found that the retrieval and creation of the 100,000 items took about a minute, so you can see where the real bottleneck is in that situation. After working out a few quirks in the test logic, I found all tests validated, so the sorting logic is dependable as well as fast.

All in all, I was quite pleased with the final product in terms of dependability and speed. However, let me suggest a few caveats to consider. The main one is the already-mentioned potential memory bloat caused by creating a bunch of dynamic assemblies. Whether or not this is a problem will depend on the target hardware and the application itself, but generally I wouldn't expect it to be an issue except in large applications (applications with many domain objects and collections) with high use. In that case, I recommend profiling and testing to ensure using this approach will meet your performance goals.

The other caveat is more a question of architecture in that the usefulness of this facility will largely depend on whether or not your architecture can use sortable custom object collections. All the same, it wouldn't hurt to add this to your library to have if you ever do find a use for it - at least you won't throw your hands up in despair when you find you want to sort your collections. I know I'm glad to have this in my toolkit now!

You can download the source code at http://ur1123.com/wcsm2

Listing 1: Multiple propert IComparer

using System;

namespace Sample.Test.Collections

{

public sealed class ShipCountryComparer :

System.Collections.IComparer

{

public int Compare(object first, object second)

{

Sample.Test.Collections.Order firstInstance =

first as Sample.Test.Collections.Order;

if (firstInstance == null)

throw new ArgumentNullException(

"First object must be an instance of

Sample.Test.Collections.Order.", "first");

Sample.Test.Collections.Order secondInstance =

second as Sample.Test.Collections.Order;

if (secondInstance == null)

throw new ArgumentNullException(

"Second object must be an instance of

Sample.Test.Collections.Order.", "second");

return firstInstance.ShipCountry.CompareTo(

secondInstance.ShipCountry);

}

}

}

Listing 2: Sample dynamically generated comparer

using System;

namespace Sample.Test.Collections.OrderComparers

{

public sealed class

ShipCountryShipCityASCShippedDateDESCEntropyComparer :

System.Collections.IComparer

{

public int Compare(object first, object second)

{

Sample.Test.Collections.Order firstInstance =

first as Sample.Test.Collections.Order;

if (firstInstance == null)

throw new ArgumentNullException(

"First object must be an instance of

Sample.Test.Collections.Order.", "first");

Sample.Test.Collections.Order secondInstance =

second as Sample.Test.Collections.Order;

if (secondInstance == null)

throw new ArgumentNullException(

"Second object must be an instance of

Sample.Test.Collections.Order.", "second");

int result = 0;

result = firstInstance.ShipCountry.CompareTo(

secondInstance.ShipCountry);

if (result !=0) return (result);

result = firstInstance.ShipCity.CompareTo(

secondInstance.ShipCity);

if (result !=0) return (result);

result = firstInstance.ShippedDate.CompareTo(

secondInstance.ShippedDate);

if (result !=0) return -(result);

result = firstInstance.Entropy.CompareTo(

secondInstance.Entropy);

if (result !=0) return (result);

return result;

}

}

}

Listing 3: Generating the dynamic comparer code

string[] sorts = sortExpression.Split(',');

if (sorts.Length == 0)

throw new ArgumentException("No sorts were passed in the sort

expression.", "sortExpression");

string typeName = type.FullName;

string comparerName = sortExpression.Replace(",", "").Replace(" ",

"") + "Comparer", dynamicTypeName = typeName +

"DynamicComparers." + comparerName;

System.Collections.IComparer comparer = null;

if (!comparers.ContainsKey(dynamicTypeName))

{

string compareCode = @"

using System;

namespace [TypeName]DynamicComparers

{

public sealed class [ComparerName] : System.Collections.IComparer

{

public int Compare(object first, object second)

{

[TypeName] firstInstance = first as [TypeName];

if (firstInstance == null)

throw new ArgumentNullException(""First object must be an

instance of [TypeName]."", ""first"");

[TypeName] secondInstance = second as [TypeName];

if (secondInstance == null)

throw new ArgumentNullException(""Second object must be an

instance of [TypeName]."", ""second"");

int result = 0;

[CompareCode]

}

}

}

";

System.Text.StringBuilder compareBuilder =

new System.Text.StringBuilder();

string propertyName;

bool desc;

for (int sortIndex = 0; sortIndex < sorts.Length; sortIndex++)

{

if (sorts[sortIndex] == null)

continue;

string[] sortValues = sorts[sortIndex].Trim().Split(' ');

if (sortValues.Length == 0)

continue;

propertyName = sortValues[0].Trim();

PropertyInfo prop = type.GetProperty(propertyName);

if (prop == null)

throw new ArgumentException(

String.Format("Specified property '{0}' is

not a property of type '{1}'.",

propertyName, type.FullName), "sortExpression");

desc = (sortValues.Length > 1) ?

(sortValues[1].Trim().ToUpper() == "DESC") : false;

bool checkForNull = !prop.PropertyType.IsValueType;

if (checkForNull)

compareBuilder.Append("\t\t\tif (firstInstance." +

propertyName + " != null)\n\t");

compareBuilder.Append("\t\t\tresult = firstInstance." +

propertyName +

".CompareTo(secondInstance." + propertyName + ");");

if (checkForNull)

{

compareBuilder.Append("\t\t\telse if (secondInstance." +

propertyName + " == null)\n");

compareBuilder.Append(

"\t\t\t\tresult = 0; else result = -1;\n");

}

compareBuilder.Append("\t\t\tif (result !=0) return " +

(desc ? "-" : "") + "(result);\n");

}

compareBuilder.Append("\t\t\treturn result;");

compareCode = compareCode.Replace("[TypeName]",

typeName).Replace("[ComparerName]", comparerName)

.Replace("[CompareCode]", compareBuilder.ToString());

}

Listing 4: Compiling the dynamic comparer

ICodeCompiler compiler = new CSharpCodeProvider().CreateCompiler();

CompilerParameters parameters = new CompilerParameters();

string primeAssemblyPath =

type.Assembly.Location.Replace(

"file:///", "").Replace("/", "\\");

parameters.ReferencedAssemblies.Add(primeAssemblyPath);

foreach (System.Reflection.AssemblyName asm in

type.Assembly.GetReferencedAssemblies())

parameters.ReferencedAssemblies.Add(this.GetAssemblyPath(asm));

parameters.GenerateInMemory = true;

CompilerResults compiled =

compiler.CompileAssemblyFromSource(parameters, compareCode);

if (compiled.Errors.HasErrors)

{

string message =

"Could not generate a comparer for '{0}'. Errors:\n";

for (int i = 0; i < compiled.Errors.Count; i++)

message += compiled.Errors[i].ErrorText + "\n";

throw new ApplicationException(

String.Format(message, dynamicTypeName));

}

Listing 5: Getting referenced assembly paths

protected string GetAssemblyPath(

System.Reflection.AssemblyName assembly)

{

string assemblyFullName = assembly.FullName;

if (assemblyPaths.Contains(assemblyFullName))

return Convert.ToString(assemblyPaths[assemblyFullName]);

else

{

string path = string.Empty;

System.Reflection.Assembly asm =

System.Reflection.Assembly.Load(assembly);

if (asm != null)

{

path =

asm.Location.Replace("file:///", "").Replace("/", "\\");

}

lock (assemblyPaths)

assemblyPaths.Add(assemblyFullName, path);

return path;

}

}

Listing 6: Test Sort method

public void Sort()

{

this.ReloadValues();

OrderCollection testGroup = new OrderCollection(),

controlGroup = new OrderCollection();

this.FillOrders(testGroup, this.selectStatement);

DateTime startSort = DateTime.Now;

testGroup.Sort(this.sortExpression);

Console.WriteLine("Time to Sort: " +

DateTime.Now.Subtract(startSort).TotalMilliseconds.ToString() +

" Milliseconds");

bool validSort = true;

if (this.timeOnly != "true") // validate the sort

{

this.FillOrders(controlGroup,

"SELECT H.* FROM (" + this.selectStatement +

") H ORDER BY " + this.sortExpression);

for (int i = 0; i < testGroup.Count; i++)

{

this.PrintValues(testGroup[i]);

if (!this.GetAreEqual(testGroup[i], controlGroup[i]))

{

if (validSort)

{

Console.WriteLine("Sort Failed On Item " + i.ToString());

this.PrintValues(controlGroup[i]);

validSort = false;

}

}

}

}

Assert.IsTrue(validSort);

}